Time is a tricky concept. While working on my latest project, Recurring Tasks, I was reminded of its intricacies. I’ve done my best to summarize what I’ve learned here as simply as I can.

The simplest way to think about time is as a straight line: it starts at some point and runs off to infinity. Most software uses midnight on January 1, 1970, Greenwich Mean Time (GMT) as the reference point for when time started. This reference point is known as the epoch. Thus, epoch millisecond 0 is midnight on January 1, 1970 (GMT), and epoch millisecond 1665360000000 is midnight on October 10, 2022 (GMT). Calculations are simple: any point in time can be described as the number of milliseconds since “the start” of time.
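
As a minimal sketch of that arithmetic, here is how the two values above could be checked in Python. The helper names `from_epoch_ms` and `to_epoch_ms` are made up for this illustration and are not part of any library.

```python
from datetime import datetime, timedelta, timezone

# The epoch: midnight on January 1, 1970, with no offset.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def from_epoch_ms(ms: int) -> datetime:
    """Turn milliseconds since the epoch into an aware UTC datetime."""
    return EPOCH + timedelta(milliseconds=ms)

def to_epoch_ms(moment: datetime) -> int:
    """Turn an aware datetime back into whole milliseconds since the epoch."""
    return (moment - EPOCH) // timedelta(milliseconds=1)

print(from_epoch_ms(0).isoformat())              # 1970-01-01T00:00:00+00:00
print(from_epoch_ms(1665360000000).isoformat())  # 2022-10-10T00:00:00+00:00
print(to_epoch_ms(datetime(2022, 10, 10, tzinfo=timezone.utc)))  # 1665360000000
```

Using integer `timedelta` arithmetic rather than floating-point seconds keeps the round trip exact.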

For humans, thinking of a point in time as a number with ten or more digits is inconvenient and hard to remember. The most widely used system for time in the world right now is the Gregorian calendar, which tracks the Earth’s revolution around the Sun and divides each year into twelve months, January to December. With this system, the date and time are referenced in a “local” sense. October 10, 2022 at 3:00 PM does not refer to the same point in physical time everywhere on Earth; 3:00 PM in New York City is 12:00 PM in Los Angeles.

To disambiguate a local datetime, an offset from Coordinated Universal Time (UTC), the successor to Greenwich Mean Time, is commonly used.

Coordinated Universal Time is effectively the local time for places at 0 degrees longitude. To convert between local times in different time zones, you convert from one local time to UTC and then from UTC to the other local time. For example, 15:00:00 UTC-04:00 (a four-hour offset behind UTC) is 3:00 PM on the east coast of the United States during the summer months and corresponds to 12:00:00 UTC-07:00 (a seven-hour offset behind UTC), which is 12:00 PM on the west coast of the United States. Both of them correspond to 19:00:00 UTC+00:00, the base UTC time with no offset. To reference a physical point in time in relation to civil time, speaking in terms of UTC is the easiest, since epoch millisecond 0 fell at precisely 00:00 UTC on January 1, 1970, and UTC does not jump for daylight saving time. Thus, each time in UTC maps to a specific epoch millisecond, and the conversion is just a matter of arithmetic.
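
The conversion chain above can be sketched with fixed offsets from Python's standard `datetime` module. The date (October 10, 2022) and the constant names are choices made for this illustration only.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets from UTC; the names are just labels for this example.
EASTERN_SUMMER = timezone(timedelta(hours=-4))  # UTC-04:00
PACIFIC_SUMMER = timezone(timedelta(hours=-7))  # UTC-07:00

# 3:00 PM on the east coast, expressed with its offset.
east = datetime(2022, 10, 10, 15, 0, tzinfo=EASTERN_SUMMER)

# Step 1: local time -> UTC. Step 2: UTC -> the other local time.
as_utc = east.astimezone(timezone.utc)
west = as_utc.astimezone(PACIFIC_SUMMER)

print(as_utc.isoformat())  # 2022-10-10T19:00:00+00:00
print(west.isoformat())    # 2022-10-10T12:00:00-07:00

# All three describe the same instant, so they share one epoch millisecond.
epoch_ms = int(as_utc.timestamp() * 1000)
print(epoch_ms)            # 1665428400000
```

Aware datetimes compare by instant, so `east == west` is `True` even though the wall-clock readings differ.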

But the conversion becomes trickier if we want to add or subtract time.

Imagine you are creating a calendaring application that allows a user to pick a local time (let’s say 3:00 PM), and the app triggers a reminder at that same time every day. The strategy you choose is to run a background job that checks every minute whether the current epoch millisecond (the only concept of time that computers understand) matches the time the user picked. How should we store the user input?

One approach is to store the chosen local time together with its offset from UTC. After all, once you translate to UTC, it’s rather easy to convert to epoch milliseconds. Let’s imagine the user is in New York City: we store the time as 15:00:00 UTC-04:00. At some point in the future, the application checks the machine time, converts it to 19:00:00 UTC, checks whether any of the reminders correspond to 19:00:00 UTC, finds 15:00:00 UTC-04:00, and the reminder is triggered! But what happens when daylight saving time ends in the autumn? Suddenly, 3:00 PM in New York City is actually 15:00:00 UTC-05:00, but the database still sees it as 15:00:00 UTC-04:00, and the reminders start triggering an hour early. One potential solution is to detect daylight saving time changes and automatically update the database with the new offsets, but how do we tell the difference between a New York City 15:00:00 UTC-04:00 that will change with daylight saving time and an Atlantis 15:00:00 UTC-04:00 that does not follow daylight saving time at all? And how do we handle all of these rules for every offset in the world?
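
To make the failure concrete, here is a rough sketch of the fixed-offset approach. The function `fire_time_utc`, the constants, and the sample dates are hypothetical and exist only for this illustration; New York's winter offset is hard-coded rather than looked up.

```python
from datetime import date, datetime, time, timedelta, timezone

STORED_OFFSET = timezone(timedelta(hours=-4))  # saved with the reminder in summer
NYC_SUMMER = timezone(timedelta(hours=-4))     # New York while daylight saving is on
NYC_WINTER = timezone(timedelta(hours=-5))     # New York after daylight saving ends

def fire_time_utc(day: date) -> datetime:
    """When the stored '15:00:00 UTC-04:00' reminder fires on a given day."""
    stored = datetime.combine(day, time(15, 0), tzinfo=STORED_OFFSET)
    return stored.astimezone(timezone.utc)

# In October the stored offset still matches New York: the user sees 3:00 PM.
october = fire_time_utc(date(2022, 10, 10))
print(october.astimezone(NYC_SUMMER).time())   # 15:00:00

# In November the reminder still fires at 19:00 UTC, which is now 2:00 PM locally.
november = fire_time_utc(date(2022, 11, 10))
print(november.astimezone(NYC_WINTER).time())  # 14:00:00
```

The stored value never changes, so the physical firing time stays at 19:00 UTC while the user's wall clock shifts underneath it.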

The problem with offsets is that they’re subject to change based on the laws of different regions. Some regions follow daylight saving time, some do not, and some do not even adhere to the conventional longitude-based offsets.

Enter location-based time zones. Instead of specifying the offset from UTC, a location-based time zone corresponds to a national region that historically has its own rules about the offset from UTC and about daylight saving time, for example America/New_York or Asia/Kolkata. The most common source of that information is the tz database. This database maps a location to the rules about its offsets from UTC (how these rules are applied is out of scope for this article, but suffice it to say, they’re tricky). By storing only a local time and a time zone and using this database, you can confidently convert local times to physical times.
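
As an illustration, Python's standard `zoneinfo` module (Python 3.9 and later) reads the tz database. This sketch assumes the database is available on the machine, either from the operating system or from the `tzdata` package; the helper `utc_offset_hours` is a made-up name for this example.

```python
from datetime import datetime
from zoneinfo import ZoneInfo

def utc_offset_hours(zone_name: str, local: datetime) -> float:
    """Look up a zone's offset from UTC, in hours, at a given local datetime."""
    aware = local.replace(tzinfo=ZoneInfo(zone_name))
    return aware.utcoffset().total_seconds() / 3600

# The same zone name yields different offsets depending on the date.
print(utc_offset_hours("America/New_York", datetime(2022, 7, 1, 15)))   # -4.0
print(utc_offset_hours("America/New_York", datetime(2022, 12, 1, 15)))  # -5.0

# Offsets are not always whole hours.
print(utc_offset_hours("Asia/Kolkata", datetime(2022, 7, 1, 15)))       # 5.5
```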

Going back to our earlier example and knowing what we know now, the implementation we should go with is to store the local time (3:00 PM) along with the location-based time zone. Now when the application runs and does its checks every minute, it can use the rules in the tz database to resolve the entry in the database to the correct UTC offset and compare the result to the current epoch millisecond.
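
Here is one way that check might look, as a sketch rather than the actual Recurring Tasks implementation. The functions `reminder_epoch_ms` and `is_due` are hypothetical, the calendar day is simply passed in by the caller, and the one-minute matching window is an assumption layered on top of the "check every minute" strategy. It relies on `zoneinfo` having access to the tz database.

```python
from datetime import date, datetime, time
from zoneinfo import ZoneInfo

def reminder_epoch_ms(day: date, local_time: time, zone_name: str) -> int:
    """Resolve a stored local time and zone to an epoch millisecond for one day."""
    local = datetime.combine(day, local_time, tzinfo=ZoneInfo(zone_name))
    return int(local.timestamp() * 1000)

def is_due(now_ms: int, day: date, local_time: time, zone_name: str,
           window_ms: int = 60_000) -> bool:
    """True if now_ms falls within the one-minute window starting at the reminder."""
    target = reminder_epoch_ms(day, local_time, zone_name)
    return target <= now_ms < target + window_ms

three_pm = time(15, 0)

# The same stored entry resolves to 19:00 UTC in October and 20:00 UTC in November.
print(reminder_epoch_ms(date(2022, 10, 10), three_pm, "America/New_York"))  # 1665428400000
print(reminder_epoch_ms(date(2022, 11, 10), three_pm, "America/New_York"))  # 1668110400000

# A check that runs 30 seconds after 3:00 PM local time still matches.
print(is_due(1668110430000, date(2022, 11, 10), three_pm, "America/New_York"))  # True
```

Because the offset is resolved at check time instead of at save time, the reminder keeps firing at 3:00 PM local time on both sides of the daylight saving change.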

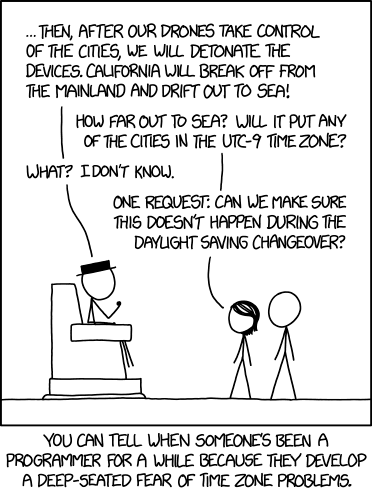

Some shrewd readers may catch that I never explained how one would determine the date to attach to the local time and time zone when converting it to a UTC-based offset. There are lots of nuances that I’ve omitted to make the overall concept digestible, but I will go over more specifics about how I implemented my Recurring Tasks in a future post. Until then, I leave you with this XKCD.